I spent the past two days at the Open Compute Project (OCP) U.S. Summit 2017, in Santa Clara, California. The HUGE news is that Microsoft has ported Windows Server to ARMv8 chips from Qualcomm and Cavium (but, for now, only for use within Azure).

Last year, ASSET joined the Open Compute Project, and we have watched as participation in the initiative has swelled, growing from 88 to 195 members. There is something inspiring about watching the engineering community come together and work altruistically for the benefit of the industry. In fact, the tagline this year, “Open Hardware. Open Software. Open Future.” describes it perfectly, I think.

The big news of the show came during the Microsoft keynote by Kushagra Vaid, general manager of Azure hardware infrastructure, who unveiled that Microsoft has ported Windows Server to 64-bit ARM server chips from Qualcomm and Cavium. Significantly, though, the port is currently intended only for internal use within Microsoft Azure, and Microsoft did not say when it might be available to the general market.

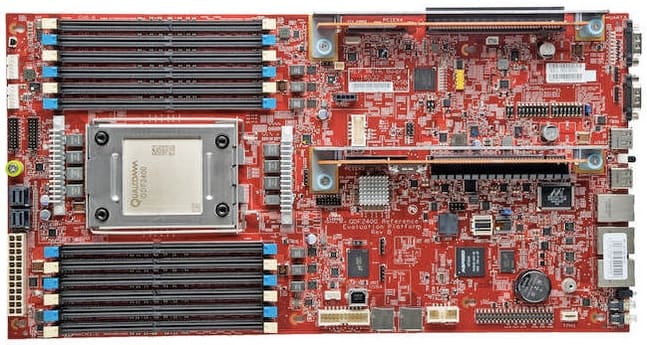

Strategically, this is a great boon to Qualcomm and Cavium, and an advantage to Microsoft itself. Both chip vendors get to work with a “friendly” customer through the internal evaluation and testing needed to optimize Microsoft toolchains and products for their devices. For instance, the Qualcomm Centriq supports up to 48 cores, is built on a 10nm FinFET process, and is scheduled to ship in volume in the second half of 2017. The Cavium ThunderX 2 has 54 cores on a 14nm process, and supports NUMA scaling across two sockets through the Cavium Coherent Processor Interconnect (CCPI). The specs on these chips might actually give them a leg up over Intel for a period of time. And Microsoft Azure gets exclusive access to the power/performance of the two devices for a while, ahead of its competitors.

A picture of the Qualcomm reference board is below:

So, maybe this is a second opportunity for the ARMv8 chip suppliers to break into the server market. The momentum seems real this time.

Other notable items from the show:

Microsoft’s Project Olympus HGX-1 design supports eight of the latest “Pascal” generation NVIDIA GPUs along with NVIDIA’s NVLink high-speed multi-GPU interconnect technology. It also provides high-bandwidth interconnectivity for up to 32 GPUs by connecting four HGX-1 units together over PCIe. This looks like an ideal platform for machine learning.

NetApp has unbundled its ONTAP product from its own hardware, and made it available to run on open source Open Compute Project hardware. This has big implications as storage companies move away from being hardware-centric.

Jason Waxman, Intel’s VP/GM of the Datacenter Solutions Group (DSG), announced that Intel has open-sourced the hardware for its “Adams Pass” reference board, which uses four Xeon Phi (Knights Landing) mega-chips for highly parallel workloads.

Vijay Rao, Facebook’s Director of Technology Strategy, shared some absolutely amazing statistics about Facebook’s infrastructure. The one that stuck with me: out of a world population of 7.5 billion, 1.86 billion people use Facebook each month, which is roughly one in four. The scale of that usage is astonishing.

Brian Dodds and Craig Ross gave an excellent overview of Facebook’s hardware lifecycle at scale, and the need to Monitor, Alarm, Provide Design Feedback, and Remediate issues in the datacenters. They walked through some real-life problems from the field, and stressed the need to “make tooling a first-class citizen”. It was a good reminder that, when it comes to hardware at large scale, everything fails, and that good tooling is how you minimize the impact.

The last presentation really resonated with me, because that is what ASSET is all about: providing excellent tooling for the early detection and remediation of hardware and software problems. In fact, our At-Scale Debug initiative, which you can see on our website under ScanWorks Embedded Diagnostics, or SED, serves precisely that purpose. SED connects pins on a circuit board's service processor (such as a BMC) to the JTAG debug port of a CPU, and embeds run-control firmware into the target for debugging at scale. This makes it possible to remotely debug a crashed or hung system, and even to run CScripts (in the Intel case) remotely on the target. It is an amazing capability that is very well-suited to large server datacenter deployments.